How to store secrets in Azure Databricks

Background

In Azure Databricks, you can write code to transform data stored in various Azure services, e.g. Azure Blob Storage or Azure Synapse. As with any other program, you will sometimes want to protect the credentials that code uses. Azure Databricks provides built-in secret management to help you do that.

Steps

Prepare Databricks command-line interface (CLI) in Azure Cloud Shell

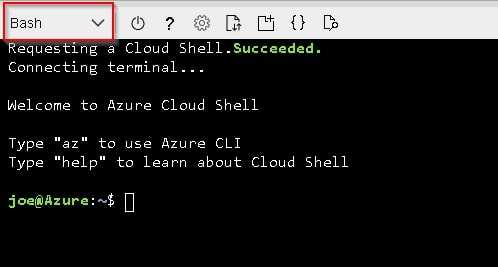

Configure your cloud shell environment

Open Cloud Shell & make sure you select “Bash” for the Cloud Shell Environment.

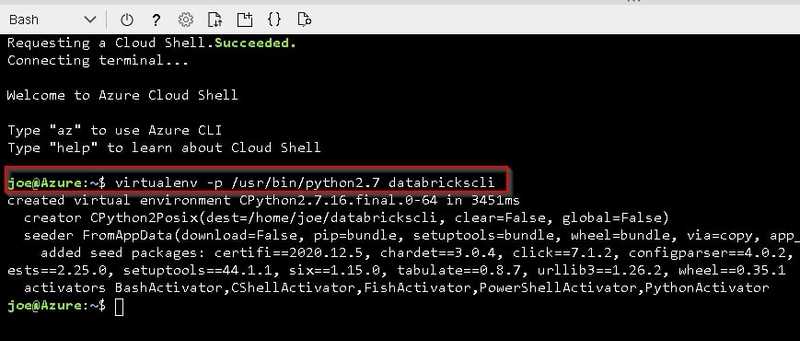

Set up Virtual Environment

Create a virtual environment with the command below.

# Bash

virtualenv -p /usr/bin/python2.7 databrickscli

Activate your virtual environment

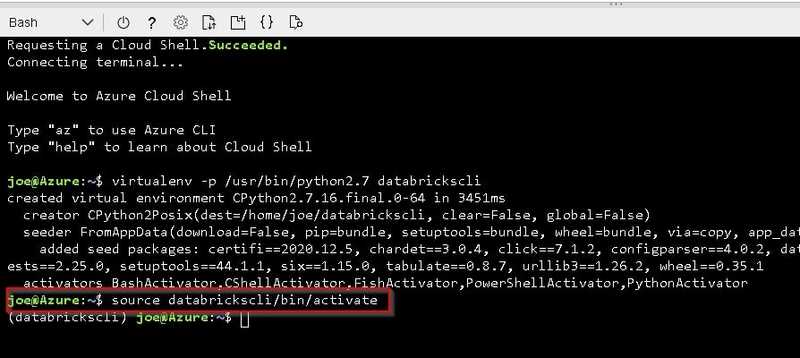

Activate your virtual environment with below command.

# Bash

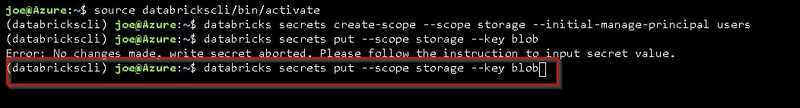

source databrickscli/bin/activateInstall Databricks CLI

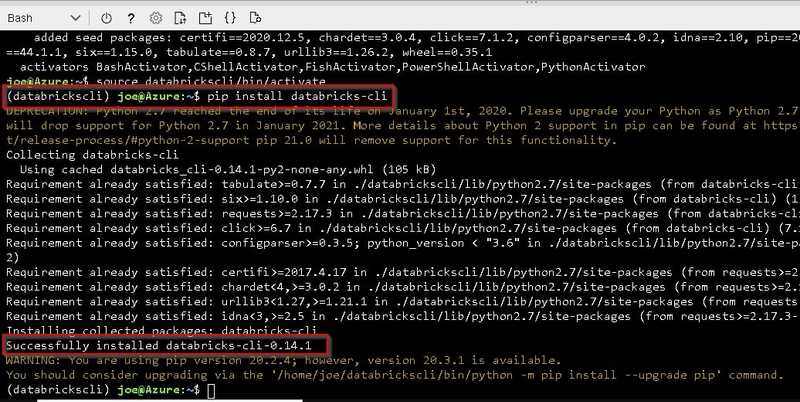

Install the Databricks CLI with the command below.

# Bash

pip install databricks-cli

Create secret in Azure Databricks

Set up authentication

Before you can create a secret, you need to authenticate as a user of Azure Databricks, which requires your Azure Databricks workspace’s URL and an access token. Once you have both, run databricks configure --token and enter them when prompted.

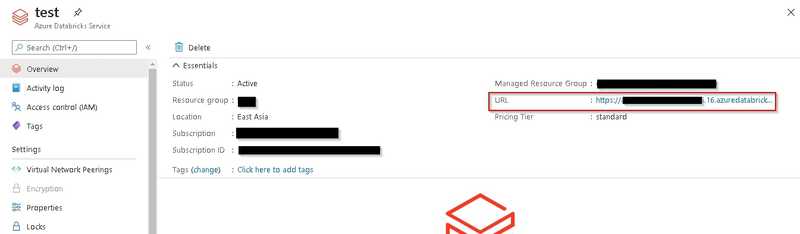

Get your Azure Databricks workspace’s URL

You can navigate to your Azure Databricks workspace and copy its URL.

Generate Access Token for your Azure Databricks workspace

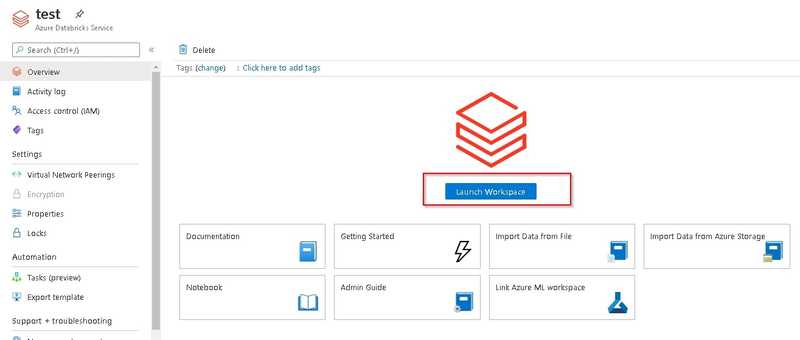

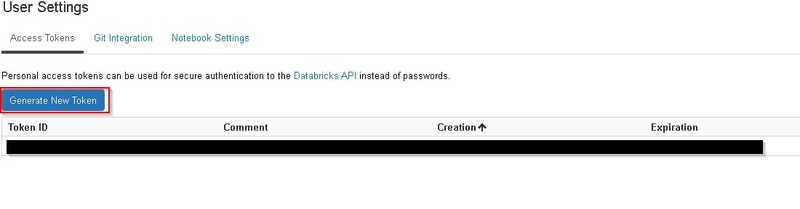

Follow the steps below to generate an access token:

- Launch Databricks workspace

- Click 'User Settings'

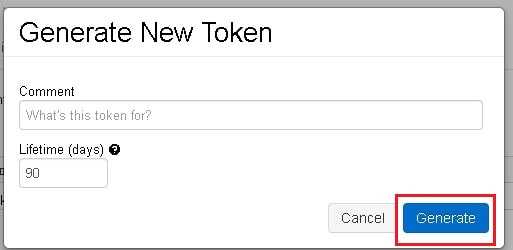

- Click 'Generate New Token'

- Configure access token & click 'Generate'

- Copy access token

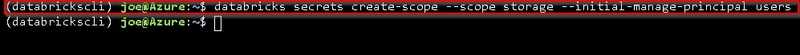
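Before moving on, it can be worth confirming that the workspace URL and the token actually work together. The Python sketch below is one way to do that. It assumes the Secrets REST API endpoint GET /api/2.0/secrets/scopes/list with bearer-token authentication. The function names are made up for illustration, and the host and token you pass in are your own values.

```python
import json
import urllib.request


def build_list_scopes_request(host, token):
    """Build (but do not send) a request that lists secret scopes."""
    # Assumption: the workspace exposes the Secrets API under /api/2.0.
    url = host.rstrip("/") + "/api/2.0/secrets/scopes/list"
    return urllib.request.Request(url, headers={"Authorization": "Bearer " + token})


def list_scope_names(host, token):
    """Send the request; an HTTP error here usually points to a bad URL or token."""
    with urllib.request.urlopen(build_list_scopes_request(host, token)) as resp:
        body = json.load(resp)
    # Fall back to an empty list if the response has no "scopes" key.
    return [scope["name"] for scope in body.get("scopes", [])]
```

Calling list_scope_names with your workspace URL and token should return a list of scope names (possibly empty) without raising an error.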

Create Secret Scope

After authenticating, first create a secret scope, which groups one or more secrets.

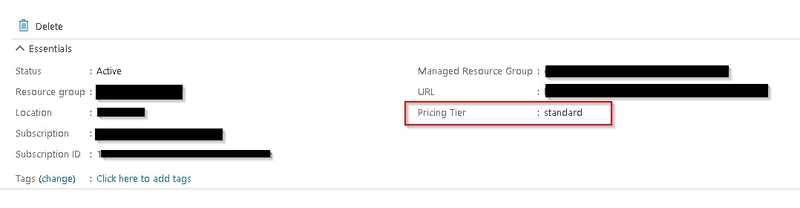

If your Databricks workspace is on the Standard plan, you can only create secret scopes that are shared with the other users in the same workspace, which is what --initial-manage-principal users does in the example below.

# Bash

databricks secrets create-scope --scope <<scope>>

# Example

databricks secrets create-scope --scope storage --initial-manage-principal users # Standard Plan

databricks secrets create-scope --scope storage # Premium plan

Create Secret

Use the command below to create a secret in the specified scope.

# Bash

databricks secrets put --scope <<scope>> --key <<key name>>

databricks secrets put --scope storage --key blob # Example

Run the command to launch the secret editor, then enter the secret value, save, and exit.

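If you would rather script the scope and secret creation than type each command, the Python sketch below assembles the same CLI invocations as argument lists. It assumes the databricks-cli package installed earlier is on the PATH. The --string-value flag, which passes the value inline instead of opening the editor, is an addition that the steps above do not use, and the function names are illustrative only.

```python
import subprocess


def create_scope_cmd(scope, standard_plan=False):
    """Argument list for `databricks secrets create-scope`."""
    cmd = ["databricks", "secrets", "create-scope", "--scope", scope]
    if standard_plan:
        # Standard-plan workspaces share the scope with all users.
        cmd += ["--initial-manage-principal", "users"]
    return cmd


def put_secret_cmd(scope, key, string_value=None):
    """Argument list for `databricks secrets put`."""
    cmd = ["databricks", "secrets", "put", "--scope", scope, "--key", key]
    if string_value is not None:
        # Assumption: --string-value supplies the value without the editor.
        cmd += ["--string-value", string_value]
    return cmd


def run(cmd):
    """Run one command and raise if the CLI exits with an error."""
    subprocess.run(cmd, check=True)
```

For example, run(create_scope_cmd("storage", standard_plan=True)) followed by run(put_secret_cmd("storage", "blob")) mirrors the Standard plan example above. Leaving string_value unset keeps the interactive editor, which avoids putting the secret in your shell history or process list.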

Use Secret in Notebook

Use the command below to read a secret in a notebook.

# Python

dbutils.secrets.get(scope="<<scope>>", key="<<key>>")
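Once the placeholders are filled in, the returned value is usually passed straight into a configuration setting rather than printed. The sketch below assumes the secret holds a Blob Storage account key and that the account is reached through the fs.azure.account.key.<account>.blob.core.windows.net Spark setting. The storage account name mystorageaccount and both helper functions are hypothetical. dbutils and spark are passed in as arguments so the functions can also be exercised outside a notebook.

```python
def blob_account_key_setting(dbutils, storage_account, scope, key):
    """Return the (name, value) pair for a Blob Storage account-key setting."""
    # dbutils.secrets.get returns the secret value as a string.
    account_key = dbutils.secrets.get(scope=scope, key=key)
    # Assumption: the account is accessed with its account key over wasbs.
    name = "fs.azure.account.key." + storage_account + ".blob.core.windows.net"
    return name, account_key


def configure_blob_access(spark, dbutils, storage_account, scope, key):
    """Apply the account-key setting to the given Spark session."""
    name, value = blob_account_key_setting(dbutils, storage_account, scope, key)
    spark.conf.set(name, value)
```

In a notebook, where spark and dbutils already exist, this would be called as configure_blob_access(spark, dbutils, "mystorageaccount", "storage", "blob").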

dbutils.secrets.get(scope="storage", key="blob") # Example

Blog: https://joeho.xyz